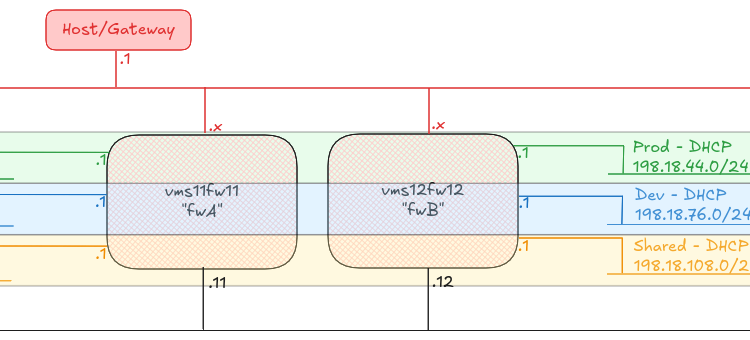

For “reasons”, at work we run AWS Elastic Kubernetes Service (EKS) with our own custom-built workers. These workers are based on Alma Linux 9, instead of AWS’ preferred Amazon Linux 2023. We manage the deployment of these workers using AWS Auto-Scaling Groups.

Our unusual configuration of these nodes means that we sometimes trip over issues which are tricky to get support on from AWS. (That’s no criticism of their support team: if I were in their position, I wouldn’t want to try to support a customer’s configuration that was so far outside the recommended one either!)

Over the past year, we’ve upgraded EKS1.23 to EKS1.27 and then on to EKS1.31, and we’ve stumbled over a few issues on the way. Here are a couple of notes on the subject, in case they help anyone else in their journey.

All three of the issues below were addressed by running an additional script on the worker nodes from a systemd timer which triggers every minute.

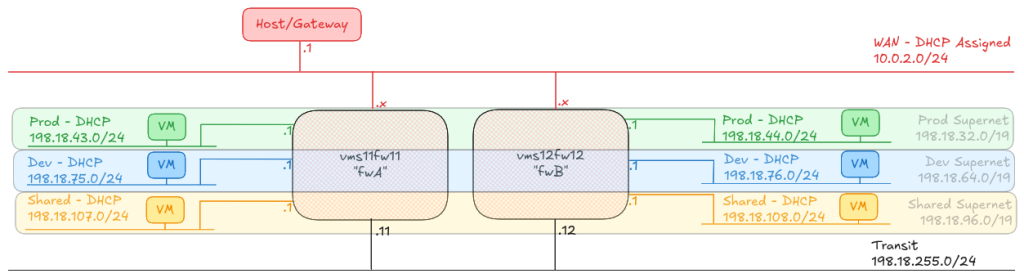

Incorrect routing for the 12th IP address onwards

Something the team found really early on (around EKS 1.18 or somewhere around there) was that the AWS VPC-CNI wasn’t managing the routing tables on the node properly. We raised an issue on the AWS VPC CNI (we were on CentOS 7 at the time) and although AWS said they’d fixed the issue, we currently need to patch the routing tables every minute on our nodes.

What happens?

When you get past the number of IP addresses that a single ENI can have (typically ~12), the AWS VPC-CNI will attach a second interface to the worker, and start adding new IP addresses to that. The VPC-CNI should set up routing for that second interface, but for some reason, in our case, it doesn’t. You can see this with a tcpdump: the traffic comes in on the second ENI, eth1, but the reply then tries to leave the node on the first ENI, eth0, like this:

[root@test-i-01234567890abcdef ~]# tcpdump -i any host 192.0.2.123

tcpdump: data link type LINUX_SLL2

dropped privs to tcpdump

tcpdump: verbose output suppressed, use -v[v]... for full protocol decode

listening on any, link-type LINUX_SLL2 (Linux cooked v2), snapshot length 262144 bytes

09:38:07.331619 eth1 In IP ip-192-168-1-100.eu-west-1.compute.internal.41856 > ip-192-0-2-123.eu-west-1.compute.internal.irdmi: Flags [S], seq 1128657991, win 64240, options [mss 1359,sackOK,TS val 2780916192 ecr 0,nop,wscale 7], length 0

09:38:07.331676 eni989c4ec4a56 Out IP ip-192-168-1-100.eu-west-1.compute.internal.41856 > ip-192-0-2-123.eu-west-1.compute.internal.irdmi: Flags [S], seq 1128657991, win 64240, options [mss 1359,sackOK,TS val 2780916192 ecr 0,nop,wscale 7], length 0

09:38:07.331696 eni989c4ec4a56 In IP ip-192-0-2-123.eu-west-1.compute.internal.irdmi > ip-192-168-1-100.eu-west-1.compute.internal.41856: Flags [S.], seq 3367907264, ack 1128657992, win 26847, options [mss 8961,sackOK,TS val 1259768406 ecr 2780916192,nop,wscale 7], length 0

09:38:07.331702 eth0 Out IP ip-192-0-2-123.eu-west-1.compute.internal.irdmi > ip-192-168-1-100.eu-west-1.compute.internal.41856: Flags [S.], seq 3367907264, ack 1128657992, win 26847, options [mss 8961,sackOK,TS val 1259768406 ecr 2780916192,nop,wscale 7], length 0

The critical line here is the last one – the request came in on eth1, but the reply is going out of eth0. Another test is to look at the output of ip rule:

[root@test-i-01234567890abcdef ~]# ip rule

0: from all lookup local

512: from all to 192.0.2.111 lookup main

512: from all to 192.0.2.143 lookup main

512: from all to 192.0.2.66 lookup main

512: from all to 192.0.2.113 lookup main

512: from all to 192.0.2.145 lookup main

512: from all to 192.0.2.123 lookup main

512: from all to 192.0.2.5 lookup main

512: from all to 192.0.2.158 lookup main

512: from all to 192.0.2.100 lookup main

512: from all to 192.0.2.69 lookup main

512: from all to 192.0.2.129 lookup main

1024: from all fwmark 0x80/0x80 lookup main

1536: from 192.0.2.123 lookup 2

32766: from all lookup main

32767: from all lookup default

Notice that there are two entries for 192.0.2.123 here: from all to 192.0.2.123 lookup main, and from 192.0.2.123 lookup 2. Let’s take a look at what table 2 contains:

[root@test-i-01234567890abcdef ~]# ip route show table 2

192.0.2.1 dev eth1 scope link

Fix the issue

This is pretty easy – table 2 has no default route, so we need to add one if it doesn’t already exist. Long before I got here, my boss created a script which first runs ip route show table main | grep default to get the default gateway, then runs ip rule list and looks for each lookup <number>, and finally runs ip route add to put a default route on each of those tables, matching the one on the main table:

ip route add default via "${GW}" dev "${INTERFACE}" table "${TABLE}"
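This isn’t the actual script, but a minimal sketch of the steps described above might look something like this. The function names are made up for illustration, and I’m assuming the interface for each table can be taken from the link route that’s already in it (as with eth1 in table 2 above). It only prints the ip route add commands rather than running them.

```shell
#!/bin/sh
# Sketch only: the function names, and taking the interface from each table's
# existing link route, are assumptions rather than the real script.

# Read `ip route show table main` on stdin; print the default gateway.
default_gateway() {
    awk '$1 == "default" && $2 == "via" { print $3; exit }'
}

# Read `ip rule list` on stdin; print each numbered table after "lookup".
custom_tables() {
    awk '{ for (i = 1; i < NF; i++)
               if ($i == "lookup" && $(i + 1) ~ /^[0-9]+$/) print $(i + 1) }' |
        sort -un
}

# Args: gateway, table. Read `ip route show table <table>` on stdin and print
# the command to add a default route, or nothing if one already exists.
default_route_cmd() {
    awk -v gw="$1" -v table="$2" '
        $1 == "default" { have_default = 1 }
        { for (i = 1; i < NF; i++) if ($i == "dev" && dev == "") dev = $(i + 1) }
        END {
            if (!have_default && dev != "")
                printf "ip route add default via %s dev %s table %s\n", gw, dev, table
        }'
}

# On a node, this prints the commands instead of running them:
#   gw=$(ip route show table main | default_gateway)
#   for t in $(ip rule list | custom_tables); do
#       ip route show table "$t" | default_route_cmd "$gw" "$t"
#   done
```

Printing the commands first makes it easy to eyeball what would change before piping the output to a shell from the timer.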

Is this still needed?

I know that when we upgraded our cluster from EKS1.23 to EKS1.27, this script was still needed. When I checked a worker running EKS1.31, after it had been running for around 12 hours with a second interface up, the script hadn’t been needed… so perhaps we can deprecate it?

Dropping packets to the containers due to Martians

When we upgraded our cluster from EKS1.23 to EKS1.27 we also changed a lot of the infrastructure under the surface (AlmaLinux 9 from CentOS 7, Containerd and Runc from Docker, CGroups v2 from CGroups v1, and so on). We also moved from using an AWS Elastic Load Balancer (ELB) or “Classic Load Balancer” to AWS Network Load Balancer (NLB).

Following the upgrade, we started seeing packets not arriving at our containers and the system logs on the node were showing a lot of martian source messages, particularly after we configured our NLB to forward original IP source addresses to the nodes.

What happens?

One thing we noticed was that each time we added a new pod to the cluster, it added a new eni[0-9a-f]{11} interface, but the sysctl value net.ipv4.conf.<interface>.rp_filter (reverse path filtering – basically, should we expect traffic from that source to arrive on this interface?) for it was set to 1, or “Strict mode”, where a packet MUST arrive on the interface that is the best return path to its source. The AWS VPC-CNI is supposed to set this to 2, or “Loose mode”, where the source only needs to be reachable via any interface.

You can tell this is happening because you’ll see messages like this in your system journal (assuming you’ve got net.ipv4.conf.all.log_martians=1 configured):

Dec 03 10:01:19 test-i-01234567890abcdef kernel: IPv4: martian source 192.168.1.100 from 192.0.2.123, on dev eth1

The net result is that packets would be dropped by the host at this point, and they’d never be received by the containers in the pods.

Fix the issue

This one is also pretty easy. We run sysctl -a and loop through any entries which match net.ipv4.conf.([^\.]+).rp_filter = (0|1) and, for each one we find, we run sysctl -w net.ipv4.conf.\1.rp_filter=2 to set it to the correct value.
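As a rough sketch of that loop (not the actual script), the matching and rewriting can be done with a single sed expression. The function name rp_filter_fix_cmds is made up for illustration, and it prints the sysctl -w commands rather than running them.

```shell
#!/bin/sh
# Sketch only: rp_filter_fix_cmds is a made-up name, and printing the commands
# rather than running them is a choice made for illustration.

# Read `sysctl -a` output on stdin; for every rp_filter entry set to 0 or 1,
# print the `sysctl -w` command that sets it to 2 (loose mode).
rp_filter_fix_cmds() {
    sed -n 's/^net\.ipv4\.conf\.\([^.][^.]*\)\.rp_filter = [01]$/sysctl -w net.ipv4.conf.\1.rp_filter=2/p'
}

# On a node:
#   sysctl -a 2>/dev/null | rp_filter_fix_cmds
```

Entries that are already set to 2, and similarly named keys such as arp_filter, produce no output.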

Is this still needed?

Yep, absolutely. As of our latest upgrade to EKS1.31, if this value isn’t set, then it will drop packets. VPC-CNI should be fixing this, but for some reason it doesn’t. And setting net.ipv4.conf.all.rp_filter to 2 doesn’t seem to make a difference, which is contrary to the kernel documentation, which says the higher of the all and per-interface values is used.
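For reference, the kernel documentation describes the value applied to an interface as the maximum of the all setting and the per-interface setting. A tiny sketch of that rule, with a made-up function name, is below. It shows what the documentation says should happen, which isn’t necessarily what we observed.

```shell
#!/bin/sh
# Sketch only: effective_rp_filter is a hypothetical helper that mirrors the
# documented rule, not something the kernel or sysctl provides.

# Args: the "all" value, then the per-interface value.
# Prints the larger of the two, which the documentation says is used.
effective_rp_filter() {
    if [ "$1" -gt "$2" ]; then echo "$1"; else echo "$2"; fi
}

# On a node:
#   effective_rp_filter "$(sysctl -n net.ipv4.conf.all.rp_filter)" \
#                       "$(sysctl -n net.ipv4.conf.eth1.rp_filter)"
```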

After 12 IP addresses are assigned to a node, Kubernetes services stop working for some pods

This was pretty weird. When we upgraded to EKS1.31 on our smallest cluster we initially thought we had an issue with CoreDNS, in that it sometimes wouldn’t resolve IP addresses for services (DNS names for services inside the cluster are resolved by <servicename>.<namespace>.svc.cluster.local to an internal IP address for the cluster – in our case, in the range 172.20.0.0/16). We upgraded CoreDNS to the EKS1.31 recommended version, v1.11.3-eksbuild.2 and that seemed to fix things… until we upgraded our next largest cluster, and things REALLY went wrong, but only when we had increased to over 12 IP addresses assigned to the node.

You might see this as frequent restarts of a container, particularly if you’re reliant on another service to fulfil an init container or the liveness/readiness checks.

What happens?

EKS1.31 moves KubeProxy from iptables or ipvs mode to nftables – a shift we had to make internally as AlmaLinux 9 no longer supports iptables mode, and ipvs is often quite flaky, especially when you have a lot of pod movements.

With a single interface and up to 11 IP addresses assigned to that interface, everything runs fine, but the moment we move to that second interface, much like in the first case above, we start seeing the pods attached to the second or later interfaces being unable to resolve service addresses. On further investigation, a dig from a container inside one of those pods to the service address of the CoreDNS service, 172.20.0.10, would time out, but a dig against the actual pod address, 192.0.2.53, would return a valid response.
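If you want to repeat that comparison, a small sketch like the one below runs the same query against both addresses and reports which path answered. The function names and the 2-second timeout are my own choices for illustration, and kubernetes.default.svc.cluster.local is just a convenient name to query. It relies on dig returning a non-zero exit code when it gets no reply from the server.

```shell
#!/bin/sh
# Sketch only: function names, the timeout and the query name are assumptions.

# Args: server address, name. Returns 0 if the server replies within 2 seconds.
dns_answers() {
    dig +time=2 +tries=1 +short "@$1" "$2" >/dev/null 2>&1
}

# Args: service address, pod address, name to query.
# Prints whether each path answered or timed out.
compare_dns_paths() {
    if dns_answers "$1" "$3"; then svc=ok; else svc=timeout; fi
    if dns_answers "$2" "$3"; then pod=ok; else pod=timeout; fi
    echo "service=$svc pod=$pod"
}

# From a container in an affected pod:
#   compare_dns_paths 172.20.0.10 192.0.2.53 kubernetes.default.svc.cluster.local
```

A result where the service address times out but the pod address answers matches the failure described above, and points at the service address translation rather than at CoreDNS itself.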

Under the surface, on each worker, KubeProxy adds a rule to nftables to say “if you try and reach 172.20.0.10, please instead direct it to 192.0.2.53”. As the containers fluctuate inside the cluster, KubeProxy is constantly re-writing these rules. For whatever reason though, KubeProxy currently seems unable to determine that a second or subsequent interface has been added, and so these rules are not applied to the pods attached to that interface… or at least, that’s what it looks like!

Fix the issue

In this case, we wrote a separate script which is also triggered every minute. This script checks whether the interfaces have changed by running ip link and looking for any interfaces called eth[0-9]+ which have changed since the last run. If they have, it runs crictl pods (which lists all the running pods in Containerd), looks for the Pod ID of KubeProxy, and then runs crictl stopp <podID> [1] and crictl rmp <podID> [1] to stop and remove the pod, forcing kubelet to restart KubeProxy on the node.

[1] Yes, they aren’t typos, stopp means “stop the pod” and rmp means “remove the pod”, and these are different to stop and rm which relate to the container.
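Again, this isn’t the real script, but a minimal sketch of the approach could look like this. The function names, the state file used to remember the previous interface list, and matching the pod with --name kube-proxy are all assumptions made for illustration.

```shell
#!/bin/sh
# Sketch only: function names, the state file, and the kube-proxy name filter
# are assumptions, not the real script.

# Read `ip -o link` output on stdin; print the sorted ethN interface names.
eth_interfaces() {
    awk '{ sub(/:$/, "", $2); if ($2 ~ /^eth[0-9]+$/) print $2 }' | sort
}

# Arg: state file. Read an interface list on stdin, save it to the state file,
# and return 0 if it differs from what was saved before (including first run).
interfaces_changed() {
    new=$(cat)
    old=$(cat "$1" 2>/dev/null || true)
    printf '%s\n' "$new" > "$1"
    [ "$new" != "$old" ]
}

# Stop and remove every pod sandbox whose name matches kube-proxy, so that
# kubelet starts a fresh one.
restart_kube_proxy() {
    for pod in $(crictl pods --name kube-proxy -q); do
        crictl stopp "$pod" && crictl rmp "$pod"
    done
}

# On a node, from the timer (the state file path is an example):
#   if ip -o link | eth_interfaces | interfaces_changed /run/eth-interfaces; then
#       restart_kube_proxy
#   fi
```

Note that this sketch treats the very first run as a change, so KubeProxy is restarted once when the timer first fires.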

Is this still needed?

As this was what I was working on all day yesterday, yep, I’d say so 😊 – in all seriousness though, if this hadn’t been a high-priority issue on the cluster, I might have tried to upgrade the AWS VPC-CNI and KubeProxy add-ons to a later version, to see if the issue was resolved, but at this time, we haven’t done that, so maybe I’ll issue a retraction later 😂

Featured image is “Apoptosis Network (alternate)” by “Simon Cockell” on Flickr and is released under a CC-BY license.

I just want to note that Will Jessop noticed a significant typo in this post within an hour of my posting. The post was updated accordingly. Will is awesome and super lovely. Thanks Will!