Just so you know: this is a long article about my wandering path towards understanding Kubernetes (K8S). It’s not an article explaining how to use K8S with your project. I hit a lot of blockers due to the stack I’m using, and I document them all. This means there’s a lot of noise and not a whole lot of signal.

In a previous blog post, I created a docker-compose.yml file for a PHP-based web application. Now that I have a working Kubernetes environment, I wanted to port that configuration into Kubernetes.

Initially, I was pointed at Kompose, a tool for converting docker-compose files into Kubernetes YAML files. In fact, it gives me 99% of what I need… except that the current version uses beta API versions in some of its output files, and these aren’t valid for the version of Kubernetes I’m running. So, let’s wind things back a bit: first we’ll find out what you need to do to use kompose, and then we can tweak its output files.

Note: I’m running all these commands as root. There’s a bit of weirdness going on because I’m using the snap of Docker, and I had a few issues running these commands as a normal user… While I could have tried to get to the bottom of this with sudo and watching logs, I just wanted to push on… Anyway.

Here’s our “simple” docker-compose file.

    version: '3'
    services:
      db:
        build:
          context: .
          dockerfile: mariadb/Dockerfile
        image: localhost:32000/db
        restart: always
        environment:
          MYSQL_ROOT_PASSWORD: a_root_pw
          MYSQL_USER: a_user
          MYSQL_PASSWORD: a_password
          MYSQL_DATABASE: a_db
        expose:
          - 3306
      nginx:
        build:
          context: .
          dockerfile: nginx/Dockerfile
        image: localhost:32000/nginx
        ports:
          - 1980:80
      phpfpm:
        build:
          context: .
          dockerfile: phpfpm/Dockerfile
        image: localhost:32000/phpfpm

This has three components: the MariaDB database, the nginx web server, and the PHP-FPM FastCGI service that nginx consumes. The database service exposes a port (3306) to other containers, with a set of hard-coded credentials (yep, that’s not great… working on that one!). The nginx service maps port 1980 on the host to port 80 in the container. So far, so … well, kinda sensible :)

If we run kompose convert against this docker-compose file, it creates four files: db-deployment.yaml, nginx-deployment.yaml, phpfpm-deployment.yaml and nginx-service.yaml. If we instead run kompose up, which converts and deploys in one step, we get an error message…

Well, actually, first we get a whole load of “INFO” and “WARN” lines while kompose builds the containers and pushes them into the MicroK8S local registry. A registry is like a package repository, for containers, and this one is served on localhost:32000 (hence all the image: localhost:32000/someimage lines in the docker-compose.yml file). But at the end, we get (today) this error:

    INFO We are going to create Kubernetes Deployments, Services and PersistentVolumeClaims for your Dockerized application. If you need different kind of resources, use the 'kompose convert' and 'kubectl create -f' commands instead.
    FATA Error while deploying application: Get http://localhost:8080/api: dial tcp 127.0.0.1:8080: connect: connection refused

Uh oh! Well, at least this is a known issue! Kubernetes clients used to default to plain http on port 8080 for the API, but the API server now uses https on port 6443. Well, that’s what I thought! This issue on the MicroK8S repo says that MicroK8S uses a different port, and that you should run microk8s.kubectl cluster-info to find it… and yep… Kubernetes master is running at https://127.0.0.1:16443. Bah.

    root@microk8s-a:~/glowing-adventure# microk8s.kubectl cluster-info
    Kubernetes master is running at https://127.0.0.1:16443
    Heapster is running at https://127.0.0.1:16443/api/v1/namespaces/kube-system/services/heapster/proxy
    CoreDNS is running at https://127.0.0.1:16443/api/v1/namespaces/kube-system/services/kube-dns:dns/proxy
    Grafana is running at https://127.0.0.1:16443/api/v1/namespaces/kube-system/services/monitoring-grafana/proxy
    InfluxDB is running at https://127.0.0.1:16443/api/v1/namespaces/kube-system/services/monitoring-influxdb:http/proxy

So, we export the KUBERNETES_MASTER environment variable (here, KUBERNETES_MASTER=https://127.0.0.1:16443), as explained in that known issue I mentioned before. Now we get a credential prompt:

    Please enter Username:

Oh no, again! I don’t have credentials!! Fortunately, the MicroK8S issue also tells us how to find those: run microk8s.config and it tells you the username and password!

    root@microk8s-a:~/glowing-adventure# microk8s.config
    apiVersion: v1
    clusters:
    - cluster:
        certificate-authority-data: <base64-data>
        server: https://10.0.2.15:16443
      name: microk8s-cluster
    contexts:
    - context:
        cluster: microk8s-cluster
        user: admin
      name: microk8s
    current-context: microk8s
    kind: Config
    preferences: {}
    users:
    - name: admin
      user:
        username: admin
        password: QXdUVmN3c3AvWlJ3bnRmZVJmdFhpNkJ3cDdkR3dGaVdxREhuWWo0MmUvTT0K

So, our username is “admin” and our password is … well, in this case a string starting QX and ending 0K, but yours will be different!
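
Rather than reading the values off by eye, you can pull them out of the microk8s.config output with awk. This is a minimal sketch. kubeconfig_value is a hypothetical helper name, and it does a plain text match rather than real YAML parsing, so it only suits simple one-word values like these.

```shell
# Hypothetical helper: print the value of the first "KEY: value" line found in
# a kubeconfig read on stdin. It is a plain text match, not a YAML parser, so
# it only suits flat, single-word values such as username and password.
kubeconfig_value() {
  awk -v key="$1:" '$1 == key { print $2; exit }'
}

# Usage on the MicroK8s node (the values will differ on yours):
#   microk8s.config | kubeconfig_value username
#   microk8s.config | kubeconfig_value password
```

Stopping at the first match is deliberate, because a kubeconfig can list more than one user.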

We run kompose up again, and put in the credentials… ARGH!

    FATA Error while deploying application: Get https://127.0.0.1:16443/api: x509: certificate signed by unknown authority

Well, now, that’s no good! Fortunately, a quick Google later, up pops this Stack Overflow suggestion (mildly amended for my circumstances):

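    # Grab the certificate(s) the API server presents and save them as a local CA
    openssl s_client -showcerts -connect 127.0.0.1:16443 < /dev/null | sed -ne '/-BEGIN CERTIFICATE-/,/-END CERTIFICATE-/p' | sudo tee /usr/local/share/ca-certificates/k8s.crt
    # Rebuild the system trust store so the new certificate is included
    update-ca-certificates
    # Restart the snap-packaged Docker daemon so it picks up the new trust store
    systemctl restart snap.docker.dockerd

Right then. Let’s run that kompose up statement again…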

    INFO We are going to create Kubernetes Deployments, Services and PersistentVolumeClaims for your Dockerized application. If you need different kind of resources, use the 'kompose convert' and 'kubectl create -f' commands instead.
    Please enter Username:
    Please enter Password:
    INFO Deploying application in "default" namespace
    INFO Successfully created Service: nginx
    FATA Error while deploying application: the server could not find the requested resource

Bah! What resource do I need? Well, actually, there’s a bug in version 1.20.0 of Kompose, which should be fixed in 1.21.0. I think the “resource” it’s talking about is the API version that the converted Deployments ask for, which this version of Kubernetes no longer serves. That’s why the Service gets created but the Deployments don’t. So, instead, let’s convert the docker-compose file into the output YAML files, and then take a peek at what’s going wrong.

    root@microk8s-a:~/glowing-adventure# kompose convert
    INFO Kubernetes file "nginx-service.yaml" created
    INFO Kubernetes file "db-deployment.yaml" created
    INFO Kubernetes file "nginx-deployment.yaml" created
    INFO Kubernetes file "phpfpm-deployment.yaml" created

So far, so good! Now let’s run kubectl apply with each of these files.

    root@microk8s-a:~/glowing-adventure# kubectl apply -f nginx-service.yaml
    Warning: kubectl apply should be used on resource created by either kubectl create --save-config or kubectl apply
    service/nginx configured
    root@microk8s-a:~# kubectl apply -f nginx-deployment.yaml
    error: unable to recognize "nginx-deployment.yaml": no matches for kind "Deployment" in version "extensions/v1beta1"

Apparently the service file is OK; the problem is in the deployment files. Hmm, OK, let’s have a look at what could be wrong. Here’s the output file:

    root@microk8s-a:~/glowing-adventure# cat nginx-deployment.yaml
    apiVersion: extensions/v1beta1
    kind: Deployment
    metadata:
      annotations:
        kompose.cmd: kompose convert
        kompose.version: 1.20.0 (f3d54d784)
      creationTimestamp: null
      labels:
        io.kompose.service: nginx
      name: nginx
    spec:
      replicas: 1
      strategy: {}
      template:
        metadata:
          annotations:
            kompose.cmd: kompose convert
            kompose.version: 1.20.0 (f3d54d784)
          creationTimestamp: null
          labels:
            io.kompose.service: nginx
        spec:
          containers:
          - image: localhost:32000/nginx
            name: nginx
            ports:
            - containerPort: 80
            resources: {}
          restartPolicy: Always
    status: {}

Well, Deployments are no longer served from the extensions/v1beta1 API (it was removed in Kubernetes 1.16). So, let’s change that to what the official documentation example shows today, apiVersion: apps/v1. Let’s see what happens when we make that change!

    root@microk8s-a:~/glowing-adventure# kubectl apply -f nginx-deployment.yaml
    error: error validating "nginx-deployment.yaml": error validating data: ValidationError(Deployment.spec): missing required field "selector" in io.k8s.api.apps.v1.DeploymentSpec; if you choose to ignore these errors, turn validation off with --validate=false

Hmm, this seems to be a fairly critical issue. A selector tells the Deployment which Pods it manages, by matching their labels. Let’s go back to the official example. We need to add the “selector” value in the “spec” block, at the same level as “template”, and its labels need to match the labels set in the template. It also looks like we don’t need most of the metadata that kompose has given us. So, let’s adjust the deployment to look a bit more like that example.

    root@microk8s-a:~/glowing-adventure# cat nginx-deployment.yaml
    apiVersion: apps/v1
    kind: Deployment
    metadata:
      labels:
        app: nginx
      name: nginx
    spec:
      replicas: 1
      selector:
        matchLabels:
          app: nginx
      template:
        metadata:
          labels:
            app: nginx
        spec:
          containers:
          - image: localhost:32000/nginx
            name: nginx
            ports:
            - containerPort: 80
            resources: {}
          restartPolicy: Always

Fab. And what happens when we run it?

    root@microk8s-a:~/glowing-adventure# kubectl apply -f nginx-deployment.yaml
    deployment.apps/nginx created

Woohoo! Let’s apply all of these now.

    root@microk8s-a:~/glowing-adventure# for i in db-deployment.yaml nginx-deployment.yaml nginx-service.yaml phpfpm-deployment.yaml; do kubectl apply -f $i ; done
    deployment.apps/db created
    deployment.apps/nginx unchanged
    service/nginx unchanged
    deployment.apps/phpfpm created

Oh, hang on a second: that service (service/nginx) is unchanged, but we changed the label from io.kompose.service: nginx to app: nginx. The service’s selector no longer matches the nginx pods, so we need to fix that. Let’s open it up and edit it!

    apiVersion: v1
    kind: Service
    metadata:
      annotations:
        kompose.cmd: kompose convert
        kompose.version: 1.20.0 (f3d54d784)
      creationTimestamp: null
      labels:
        io.kompose.service: nginx
      name: nginx
    spec:
      ports:
      - name: "1980"
        port: 1980
        targetPort: 80
      selector:
        io.kompose.service: nginx
    status:
      loadBalancer: {}

Ah, so this has the “annotations” field in the metadata too, and, as suspected, it’s got the io.kompose.service label as the selector. Hmm, OK, let’s fix that.

    root@microk8s-a:~/glowing-adventure# cat nginx-service.yaml
    apiVersion: v1
    kind: Service
    metadata:
      labels:
        app: nginx
      name: nginx
    spec:
      ports:
      - name: "1980"
        port: 1980
        targetPort: 80
      selector:
        app: nginx
    status:
      loadBalancer: {}

Much better. And let’s apply it…

    root@microk8s-a:~/glowing-adventure# kubectl apply -f nginx-service.yaml
    service/nginx configured

Fab! So, let’s review the state of the deployments, the pods and the replica sets.

    root@microk8s-a:~/glowing-adventure# kubectl get deploy
    NAME     READY   UP-TO-DATE   AVAILABLE   AGE
    db       1/1     1            1           8m54s
    nginx    0/1     1            0           8m53s
    phpfpm   1/1     1            1           8m48s

Hmm. That doesn’t look right.

    root@microk8s-a:~/glowing-adventure# kubectl get pod
    NAME                      READY   STATUS             RESTARTS   AGE
    db-f78f9f69b-grqfz        1/1     Running            0          9m9s
    nginx-7774fcb84c-cxk4v    0/1     CrashLoopBackOff   6          9m8s
    phpfpm-66945b7767-vb8km   1/1     Running            0          9m3s
    root@microk8s-a:~# kubectl get rs
    NAME                DESIRED   CURRENT   READY   AGE
    db-f78f9f69b        1         1         1       9m18s
    nginx-7774fcb84c    1         1         0       9m17s
    phpfpm-66945b7767   1         1         1       9m12s

Yep. What does “CrashLoopBackOff” even mean?! In short, the container keeps crashing, and Kubernetes waits a little longer before each restart. Let’s check the logs. They come from the container running inside the pod, so let’s point the kubectl logs command at the pod name.

    root@microk8s-a:~/glowing-adventure# kubectl logs nginx-7774fcb84c-cxk4v
    2020/01/17 08:08:50 [emerg] 1#1: host not found in upstream "phpfpm" in /etc/nginx/conf.d/default.conf:10
    nginx: [emerg] host not found in upstream "phpfpm" in /etc/nginx/conf.d/default.conf:10

Hmm. That’s not good. In the docker-compose setup, each container could reach the others by their service names, so nginx could just find “phpfpm”. In Kubernetes, we need to do something different. At this point I ran out of ideas, so I asked on the McrTech Slack for advice. I was asked to run this command, and would you look at that: there’s nothing for nginx to connect to.

    root@microk8s-a:~/glowing-adventure# kubectl get service
    NAME         TYPE        CLUSTER-IP      EXTERNAL-IP   PORT(S)    AGE
    kubernetes   ClusterIP   10.152.183.1    <none>        443/TCP    24h
    nginx        ClusterIP   10.152.183.62   <none>        1980/TCP   9m1s

It turns out that I need to create a service for each of the deployments. A Service’s name is what other pods look up in the cluster’s DNS, so nginx can only resolve “phpfpm” once a Service with that name exists. So, I copied the nginx-service.yaml file into db-service.yaml and phpfpm-service.yaml, edited the files and now… tada!

root@microk8s-a:~/glowing-adventure# kubectl get service

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

db ClusterIP 10.152.183.61 <none> 3306/TCP 5m37s

kubernetes ClusterIP 10.152.183.1 <none> 443/TCP 30h

nginx ClusterIP 10.152.183.62 <none> 1980/TCP 5h54m

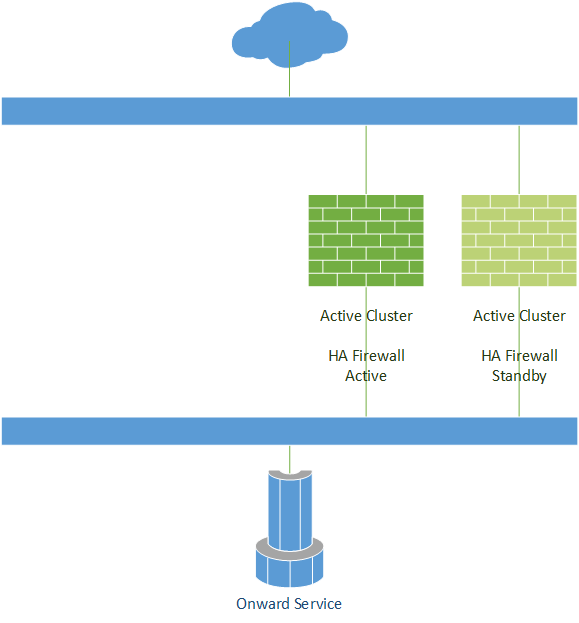

phpfpm ClusterIP 10.152.183.69 <none> 9000/TCP 5m41sBut wait… How do I actually address nginx now? Huh. No external-ip (not even “pending”, which is what I ended up with), no ports to talk to. Uh oh. Now I need to understand how to hook this service up to the public IP of this node. Ahh, see up there it says “ClusterIP”? That means “this service is only available INSIDE the cluster”. If I change this to “NodePort” or “LoadBalancer”, it’ll attach that port to the external interface.
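
The internal db and phpfpm Services only differ in name and port, so writing them can be scripted. ClusterIP is the default type, so a minimal Service needs just a name, a port and a selector. This is a sketch under those assumptions. write_service is a hypothetical helper, and it names the port after the service (the db Service later in this post uses mariadb instead).

```shell
# Hypothetical helper: write NAME-service.yaml, a ClusterIP Service (the
# default type) called NAME that forwards PORT to the same port on any pod
# labelled app=NAME. Other pods reach it by its name, e.g. nginx's "phpfpm".
write_service() {
  svc_name=$1
  svc_port=$2
  cat > "${svc_name}-service.yaml" <<EOF
apiVersion: v1
kind: Service
metadata:
  labels:
    app: ${svc_name}
  name: ${svc_name}
spec:
  ports:
  - name: ${svc_name}
    port: ${svc_port}
    targetPort: ${svc_port}
  selector:
    app: ${svc_name}
EOF
}

# Usage, with the ports from the service listing above:
#   write_service db 3306
#   write_service phpfpm 9000
```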

What’s the difference between “NodePort” and “LoadBalancer”? Well, according to this page, if you are using a managed public cloud service that supports an external load balancer, setting the type to “LoadBalancer” should attach your node port to the provider’s load balancer automatically. Otherwise, you can either set the nodePort value yourself or let Kubernetes pick one. Either way it must fall between 30000 and 32767 by default, although the API server’s --service-node-port-range flag can change that range. You can then hook your own load balancer up to that port on each node, for example Client -> Load Balancer IP (TCP/80) -> K8S node IP (e.g. TCP/31234).
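
You can quickly sanity-check a hand-picked nodePort against that range. This is a minimal sketch that assumes the default range. is_default_node_port is a hypothetical name, and a cluster started with a different --service-node-port-range would need other bounds.

```shell
# Succeed if PORT falls inside the default NodePort range, 30000-32767.
# A cluster can change the range with the API server's
# --service-node-port-range flag, so treat these bounds as the default only.
is_default_node_port() {
  [ "$1" -ge 30000 ] && [ "$1" -le 32767 ]
}

# Usage:
#   is_default_node_port 30000 && echo "ok for nodePort"
#   is_default_node_port 1980 || echo "1980 is a service port, not a node port"
```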

So, how does this actually look? I’m going to use the “LoadBalancer” option, because if I ever deploy this to “live”, I want it to integrate with the load balancer. For now, though, I can cope with addressing a “high port”. Right, let me open that nginx-service.yaml back up and make the changes.

    root@microk8s-a:~/glowing-adventure# cat nginx-service.yaml
    apiVersion: v1
    kind: Service
    metadata:
      labels:
        app: nginx
      name: nginx
    spec:
      type: LoadBalancer
      ports:
      - name: nginx
        nodePort: 30000
        port: 1980
        targetPort: 80
      selector:
        app: nginx
    status:
      loadBalancer: {}

The key parts here are the lines type: LoadBalancer (under spec:) and nodePort: 30000 (under ports:). Note that, at this point, I can use type: LoadBalancer and type: NodePort interchangeably. But, as I said, if you were using this in something like AWS or Azure, you might want to do it differently!

So, now I can curl http://192.0.2.100:30000 (where 192.0.2.100 is the address of the “bridged interface” of my K8S environment) and get a response from my PHP application, behind nginx. From poking at it a bit, I also know that it works with my database.
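
Looking back, two of the hand edits to the kompose 1.20.0 output were purely mechanical. The Deployment apiVersion changed from extensions/v1beta1 to apps/v1, and the io.kompose.service label key became app. A minimal sketch of both as one sed call follows, assuming GNU sed (for -i without a suffix) and the file names kompose produced. tidy_kompose_output is a hypothetical name. It does not add the spec.selector that apps/v1 Deployments need, and it leaves kompose’s annotations in place.

```shell
# Hypothetical helper: apply, in place, the two mechanical edits made by hand
# to the kompose output in this post (GNU sed -i; plain text, not YAML-aware):
#  1. Deployments move from the removed extensions/v1beta1 API to apps/v1.
#  2. The io.kompose.service label key becomes app, in labels and selectors.
# The apps/v1 Deployments still need a spec.selector added by hand.
tidy_kompose_output() {
  sed -i \
    -e 's|^apiVersion: extensions/v1beta1$|apiVersion: apps/v1|' \
    -e 's/io\.kompose\.service:/app:/' \
    "$@"
}

# Usage, in the directory where kompose convert wrote its files:
#   tidy_kompose_output *-deployment.yaml *-service.yaml
```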

OK, one last thing. I don’t really want lots of little files with config items in them. I quite liked the docker-compose file as it was, because it had all the services in one block and I could just run “docker-compose up”. Kompose, though, split it out into lots of pieces. A Kubernetes YAML file can hold more than one document. A line containing just --- separates them, and kubectl treats each document after a divider as a separate object, just as if it had come from its own file. Like this, I could have the following layout:

    apiVersion: apps/v1
    kind: Deployment
    more: stuff
    ---
    apiVersion: v1
    kind: Service
    more: stuff
    ---
    apiVersion: apps/v1
    kind: Deployment
    more: stuff
    ---
    apiVersion: v1
    kind: Service
    more: stuff

But, thinking about it, I quite like having each piece logically together. So I really want db.yaml, nginx.yaml and phpfpm.yaml, where each of those files contains both the deployment and the service. So, let’s do that. I’ll do one file, so it makes more sense, and then show you the output.

    root@microk8s-a:~/glowing-adventure# mkdir -p k8s
    root@microk8s-a:~/glowing-adventure# mv db-deployment.yaml k8s/db.yaml
    root@microk8s-a:~/glowing-adventure# echo "---" >> k8s/db.yaml
    root@microk8s-a:~/glowing-adventure# cat db-service.yaml >> k8s/db.yaml
    root@microk8s-a:~/glowing-adventure# rm db-service.yaml
    root@microk8s-a:~/glowing-adventure# cat k8s/db.yaml
    apiVersion: apps/v1
    kind: Deployment
    metadata:
      labels:
        app: db
      name: db
    spec:
      replicas: 1
      selector:
        matchLabels:
          app: db
      template:
        metadata:
          labels:
            app: db
        spec:
          containers:
          - env:
            - name: MYSQL_DATABASE
              value: a_db
            - name: MYSQL_PASSWORD
              value: a_password
            - name: MYSQL_ROOT_PASSWORD
              value: a_root_pw
            - name: MYSQL_USER
              value: a_user
            image: localhost:32000/db
            name: db
            resources: {}
          restartPolicy: Always
    ---
    apiVersion: v1
    kind: Service
    metadata:
      labels:
        app: db
      name: db
    spec:
      ports:
      - name: mariadb
        port: 3306
        targetPort: 3306
      selector:
        app: db
    status:
      loadBalancer: {}

So, now, if I do kubectl apply -f k8s/db.yaml, I’ll get this output:

    root@microk8s-a:~/glowing-adventure# kubectl apply -f k8s/db.yaml
    deployment.apps/db unchanged
    service/db unchanged

You can see the final files in the git repo for this set of tests.
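
The db merge above can be repeated for nginx and phpfpm with a small loop. This is a minimal sketch. It assumes the NAME-deployment.yaml / NAME-service.yaml naming used in this post, and merge_manifests is a hypothetical name. It only deletes the originals once the combined file has been written.

```shell
# Hypothetical helper: for each NAME given, join NAME-deployment.yaml and
# NAME-service.yaml into one multi-document file, k8s/NAME.yaml, with a "---"
# line between the two documents, then delete the two originals. Assumes each
# input ends with a newline (otherwise "---" is glued onto its last line).
# A NAME with a missing input file is skipped and nothing is deleted for it.
merge_manifests() {
  mkdir -p k8s
  for name in "$@"; do
    if [ -f "${name}-deployment.yaml" ] && [ -f "${name}-service.yaml" ]; then
      { cat "${name}-deployment.yaml"; echo "---"; cat "${name}-service.yaml"; } \
        > "k8s/${name}.yaml" &&
        rm "${name}-deployment.yaml" "${name}-service.yaml"
    else
      echo "skipping ${name}: missing deployment or service file" >&2
    fi
  done
}

# Usage, then apply every file in the directory in one go:
#   merge_manifests db nginx phpfpm
#   kubectl apply -f k8s/
```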

Next episode, I’ll start looking at making my application scale (as that’s the thing that Kubernetes is known for) and having more than one K8S node to house my K8S pods!

Featured image is “So many coats…” by “Scott Griggs” on Flickr and is released under a CC-BY-ND license.