I’m currently building a Proof Of Value (POV) environment for a product, and one of the things I needed in my environment was an Active Directory domain.

To do this in AWS, I had to do the following steps:

- Build my Domain Controller
  - Install Windows
  - Set the hostname (Reboot)
  - Promote the machine to being a Domain Controller (Reboot)
  - Create a domain user
- Build my Member Server
  - Install Windows
  - Set the hostname (Reboot)
  - Set the DNS client to point to the Domain Controller
  - Join the server to the domain (Reboot)

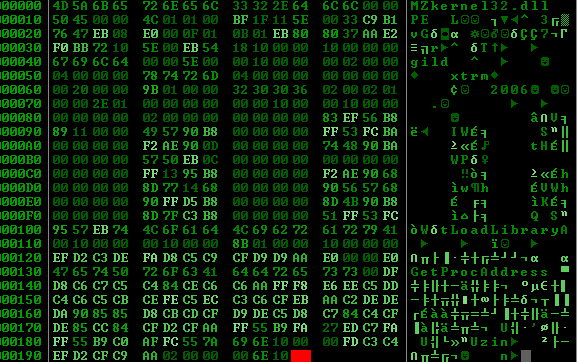

To make this work, I had to find a way to trigger build steps after each reboot. I was working with Windows 2012R2, Windows 2016 and Windows 2019, so the solution had to be cross-version. Fortunately I found this script online! That version was great for Windows 2012R2, but didn’t cover Windows 2016 or later… So let’s break down what I’ve done!

In your userdata field, you need two XML-style tags, as follows:

    <persist>true</persist>
    <powershell>
    $some = "powershell code"
    </powershell>

The first tag says to Windows 2016+ “keep trying to run this script on each boot” (note that you need to stop it from repeating work that is no longer relevant on each boot; we’ll get to that in a second!), and the second holds the PowerShell commands you want it to run. The rest of this post focuses just on the PowerShell block.

    # Registry key that records how many boot steps have already run
    $path = 'HKLM:\Software\UserData'

    if (!(Get-Item $path -ErrorAction SilentlyContinue)) {
        New-Item $path
        New-ItemProperty -Path $path -Name RunCount -Value 0 -PropertyType dword
    }

    $runCount = Get-ItemProperty -Path $path -Name RunCount -ErrorAction SilentlyContinue | Select-Object -ExpandProperty RunCount

    # Assumption: the listing never sets $ver, so read the OS caption here
    # (for example "Microsoft Windows Server 2012 R2 Standard")
    $ver = (Get-CimInstance -ClassName Win32_OperatingSystem).Caption

    if ($runCount -ge 0) {
        switch ($runCount) {
            0 {
                # Move the pointer on so the next boot runs the next step
                $runCount = 1 + [int]$runCount
                Set-ItemProperty -Path $path -Name RunCount -Value $runCount

                if ($ver -match 2012) {
                    # Enable user data
                    $EC2SettingsFile = "$env:ProgramFiles\Amazon\Ec2ConfigService\Settings\Config.xml"
                    $xml = [xml](Get-Content $EC2SettingsFile)
                    $xmlElement = $xml.get_DocumentElement()
                    $xmlElementToModify = $xmlElement.Plugins

                    foreach ($element in $xmlElementToModify.Plugin) {
                        if ($element.name -eq "Ec2HandleUserData") {
                            $element.State = "Enabled"
                        }
                    }

                    $xml.Save($EC2SettingsFile)
                }

                $some = "PowerShell Script"
            }
        }
    }

Whew, what a block! Well, again, we can split this up into a couple of bits.
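One of those bits, the EC2Config edit inside the Windows 2012 check, can be sketched as a standalone function so it can be tried against a copy of `Config.xml` before it goes anywhere near a real instance. This is only a sketch: the function name `Enable-Ec2UserDataPlugin` and its `-ConfigPath` parameter are made up for illustration, and the XML layout (`Plugins`, `Plugin`, `Name`, `State`) is assumed from the element names the listing reads.

```powershell
# Hypothetical helper: the name and parameter are illustrative,
# not something EC2Config itself provides.
function Enable-Ec2UserDataPlugin {
    param(
        [Parameter(Mandatory = $true)]
        [string]$ConfigPath
    )

    # XmlDocument.Save() resolves relative paths against the process
    # working directory, so resolve to a full path first.
    $fullPath = (Resolve-Path -LiteralPath $ConfigPath).ProviderPath

    $xml = [xml](Get-Content -LiteralPath $fullPath -Raw)

    foreach ($plugin in $xml.DocumentElement.Plugins.Plugin) {
        if ($plugin.Name -eq 'Ec2HandleUserData') {
            # Ask the userdata handler to run again on the next boot
            $plugin.State = 'Enabled'
        }
    }

    $xml.Save($fullPath)
}
```

On a Windows 2012R2 instance, it would be called with the same path the listing uses: `Enable-Ec2UserDataPlugin -ConfigPath "$env:ProgramFiles\Amazon\Ec2ConfigService\Settings\Config.xml"`.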

In the first few lines, we build a pointer: a note which says “We got up to here on our previous boots”. We then read that pointer into a variable and run whichever steps in the switch are filed under that number. That’s this block:

    $path = 'HKLM:\Software\UserData'

    if (!(Get-Item $path -ErrorAction SilentlyContinue)) {
        New-Item $path
        New-ItemProperty -Path $path -Name RunCount -Value 0 -PropertyType dword
    }

    $runCount = Get-ItemProperty -Path $path -Name RunCount -ErrorAction SilentlyContinue | Select-Object -ExpandProperty RunCount

    if ($runCount -ge 0) {
        switch ($runCount) {
            # One numbered block per boot goes here
        }
    }

The next part (and you’ll repeat it for each numbered reboot step you need to perform) says “increment the number”, then “if this is Windows 2012, remind the userdata handler that the script needs to be run again next boot”. That’s this block:

    0 {
        # Move the pointer on so the next boot runs the next step
        $runCount = 1 + [int]$runCount
        Set-ItemProperty -Path $path -Name RunCount -Value $runCount

        # $ver is expected to hold the OS caption, e.g. from Win32_OperatingSystem
        if ($ver -match 2012) {
            # Enable user data
            $EC2SettingsFile = "$env:ProgramFiles\Amazon\Ec2ConfigService\Settings\Config.xml"
            $xml = [xml](Get-Content $EC2SettingsFile)
            $xmlElement = $xml.get_DocumentElement()
            $xmlElementToModify = $xmlElement.Plugins

            foreach ($element in $xmlElementToModify.Plugin) {
                if ($element.name -eq "Ec2HandleUserData") {
                    $element.State = "Enabled"
                }
            }

            $xml.Save($EC2SettingsFile)
        }
    }

In fact, in my own userdata script, which is rendered through Terraform, it looks like this:

    $path = 'HKLM:\Software\UserData'

    if (!(Get-Item $path -ErrorAction SilentlyContinue)) {
        New-Item $path
        New-ItemProperty -Path $path -Name RunCount -Value 0 -PropertyType dword
    }

    $runCount = Get-ItemProperty -Path $path -Name RunCount -ErrorAction SilentlyContinue | Select-Object -ExpandProperty RunCount

    if ($runCount -ge 0) {
        switch ($runCount) {
            0 {
                ${file("templates/step.tmpl")}
                ${templatefile(
                    "templates/rename_windows.tmpl",
                    {
                        hostname = "SomeMachine"
                    }
                )}
            }
            1 {
                ${file("templates/step.tmpl")}
                ${templatefile(
                    "templates/join_ad.tmpl",
                    {
                        dns_ipv4      = "192.0.2.1",
                        domain_suffix = "ad.mycorp",
                        join_account  = "ad\\someuser",
                        join_password = "SomePassw0rd!"
                    }
                )}
            }
        }
    }

Then, after each reboot, you need a new block. I have a block to change the computer name, a block to join the machine to the domain, and a block to install any software that I need.
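The contents of the rename and join templates aren't shown in this post, so here is a minimal sketch of what those two steps could look like, assuming the built-in `Rename-Computer`, `Set-DnsClientServerAddress` and `Add-Computer` cmdlets are used. The function names `Set-MachineName` and `Join-MachineToDomain` and their parameters are hypothetical; in a Terraform template the values would come from variables such as `hostname` or `dns_ipv4` rather than function parameters.

```powershell
# Hypothetical step helpers, not the contents of the actual templates.

function Set-MachineName {
    param([Parameter(Mandatory = $true)][string]$Hostname)

    # The new name only takes effect after a reboot; -Restart triggers it
    Rename-Computer -NewName $Hostname -Force -Restart
}

function Join-MachineToDomain {
    param(
        [Parameter(Mandatory = $true)][string]$DnsIPv4,
        [Parameter(Mandatory = $true)][string]$DomainSuffix,
        [Parameter(Mandatory = $true)][string]$JoinAccount,
        [Parameter(Mandatory = $true)][string]$JoinPassword
    )

    # Point the DNS client on every active adapter at the Domain Controller
    Get-NetAdapter | Where-Object { $_.Status -eq 'Up' } | ForEach-Object {
        Set-DnsClientServerAddress -InterfaceIndex $_.ifIndex -ServerAddresses $DnsIPv4
    }

    # Build a credential object from the plain-text account and password
    $securePassword = ConvertTo-SecureString $JoinPassword -AsPlainText -Force
    $credential = New-Object System.Management.Automation.PSCredential($JoinAccount, $securePassword)

    # Joining the domain also needs a reboot
    Add-Computer -DomainName $DomainSuffix -Credential $credential -Restart
}
```

Inside the switch, step `0` would then call `Set-MachineName -Hostname 'SomeMachine'` and step `1` would call `Join-MachineToDomain` with the DNS address, domain suffix and join credentials, each after the pointer has been incremented.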

Featured image is “New shoes” by “Morgaine” on Flickr and is released under a CC-BY-SA license.